In deep neural networks, the most frequently used component is probably the linear layer. However, a linear layer on its own does not work well as a classifier. In this article, I explain why, from my own point of view.

A linear layer is a linear function of the following form: $$F(A) = A \vec{x}$$, where $A$ is a matrix and $\vec{x}$ is the input vector. Training a DNN means finding an $A$ that optimizes an objective function. In this process, $\frac{\partial{F}}{\partial{A}}$ is computed and used to update $A$. To see what $\frac{\partial{F}}{\partial{A}}$ looks like, we can rewrite $F$ as $$

F'(vec(A)) = M vec(A)

$$, where $vec(A)$ is the vectorized $A$, and $M$ is a corresponding matrix which contains suitably distributed entries from $\vec{x}$ so that $$

F(A) = F'(vec(A)) = M vec(A)

$$.
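As a concrete check of this rewriting, here is a minimal NumPy sketch. It assumes a column-major $vec$ and builds $M$ as the Kronecker product $\vec{x}^T \otimes I$, which is one standard way to arrange the entries of $\vec{x}$; the sizes and variable names are illustrative and not part of the derivation.

```python
import numpy as np

rng = np.random.default_rng(0)
k, m = 2, 3                      # illustrative sizes: A is k x m, x has m entries
A = rng.normal(size=(k, m))
x = rng.normal(size=m)

# Column-major vectorization: stack the columns of A into one long vector.
vec_A = A.reshape(-1, order="F")

# M holds copies of the entries of x, arranged so that M @ vec(A) equals A @ x.
M = np.kron(x[None, :], np.eye(k))   # shape (k, k * m)

lhs = A @ x
rhs = M @ vec_A
print(np.allclose(lhs, rhs))
```

Note that $M$ depends only on $\vec{x}$, not on $A$, which is what makes the derivative in the next step easy to read off.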

Now, it is clear that $$

\frac{\partial{F}}{\partial{A}} = \frac{\partial{F'}}{\partial{vec(A)}} = M

$$. A gradient descent optimizer updates $A$ by a multiple of $M$: $vec(A) \leftarrow vec(A) - \eta M^T \vec{\Lambda}$, where $\eta$ is the learning rate and $\vec{\Lambda}$ is a vector that summarizes the gradients backpropagated from the layers that follow. Hence $A$ only ever changes along vectors in the column space of $M^T$ (the row space of $M$), that is, along directions built from $\vec{x}$. When the difference between the optimal $A$ and the initial $A$ lies outside that space, the optimum will never be reached, no matter how many times $A$ is updated.

These findings can help us improve the design of even simple networks. For example, consider a layer $G(\vec{x}) = A \vec{x}$, where $A$ is a $2 \times m$ matrix. When the $\vec{x}$s are almost collinear, each row of $A$ will only move along the common direction of the $\vec{x}$s. Hence, it is quite difficult to achieve an effect like switching two entries of $A$.
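The collinear case can be checked numerically. The sketch below is only an illustration under assumptions of my own choosing: exactly collinear inputs, random targets, a mean squared error loss, and plain gradient descent with a fixed learning rate. It measures how much of the change in $A$ lies outside the common direction of the inputs.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 4, 50                         # illustrative input size and sample count
u = rng.normal(size=m)
u /= np.linalg.norm(u)               # common direction of all inputs
t = rng.normal(size=n)
X = t[:, None] * u[None, :]          # every sample is a multiple of u (collinear)
Y = rng.normal(size=(n, 2))          # arbitrary targets for a 2 x m layer

A0 = rng.normal(size=(2, m))
A = A0.copy()
lr = 0.01                            # assumed learning rate
for _ in range(200):
    residual = X @ A.T - Y           # shape (n, 2)
    grad = residual.T @ X / n        # gradient of the squared error w.r.t. A (up to a factor 2)
    A -= lr * grad

delta = A - A0
# Remove the component of each row of delta along u; what is left should vanish.
off_direction = delta - (delta @ u)[:, None] * u[None, :]
print(np.abs(off_direction).max())
```

The printed value should be at the level of floating-point rounding: every row of `A - A0` is a multiple of `u`, so the other $m - 1$ directions of each row never move from their initial values.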

To solve the problem, the effective dimension of the $\vec{x}$s must be increased, either directly by adding more diverse samples, or indirectly by expanding the model to multiple layers. For example, define $$

F(\vec{x}) = A v( B \vec{x} )

$$, where $v$ is some activation function. This is in fact two linear layers with an activation in between. $B \vec{x}$ first expands $\vec{x}$ into a larger set of values, then $v$ introduces more linear independence, before the result is multiplied by $A$. From this viewpoint, ReLU is a natural choice of activation function: it is far from a linear function, and therefore produces more dimensions. The higher dimension then lets the columns of $A$ combine in more varied ways.

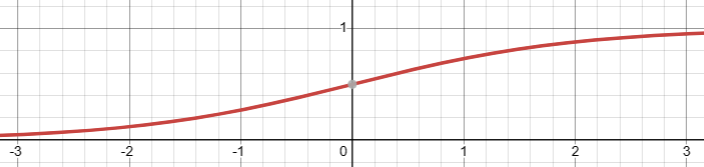
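The gain in dimension from ReLU can be seen in a small NumPy sketch. The setup is an assumption made for illustration: the samples lie on a line in 2-D, and $B$ is hand-picked so that each hidden unit switches on at a different point of that line. The sketch compares the rank of the features before and after the activation.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

# Samples on a line in 2-D: x = (t, 1). Their span has dimension 2.
t = np.linspace(-3.0, 3.0, 61)
X = np.stack([t, np.ones_like(t)], axis=1)            # shape (61, 2)

# Hand-picked B (an assumption for illustration): unit j computes t - k_j,
# so each unit switches on at a different point k_j of the line.
kinks = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
B = np.stack([np.ones_like(kinks), -kinks], axis=1)   # shape (5, 2)

Z = X @ B.T            # linear features, shape (61, 5)
H = relu(Z)            # features after the activation

rank_linear = np.linalg.matrix_rank(Z)
rank_relu = np.linalg.matrix_rank(H)
print(rank_linear, rank_relu)
```

Without the activation, the five features still span only 2 dimensions, however wide $B$ is. With ReLU, each unit with its own switching point adds a new independent direction, so the features here reach rank 5.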

In contrast, if $v$ is a sigmoid function, it changes smoothly everywhere and is close to a linear function near the origin. Hence, its ability to add dimensions is low compared with ReLU.

That is all! ... Wait, not quite!

There is still a problem. The nonlinearity of ReLU only occurs at 0. If the inputs to ReLU stay far from 0, ReLU is virtually linear on them, and its merits are lost. This is where a good initialization is needed. If the layer is large enough, a good initialization will cover both the positive and the negative side of the ReLU input for every input entry, and hence give enough dimensions for the column space of $B$. However, if $B$ is too large, it will be wasteful.

A natural question is how large a layer must be at least. Suppose the input $\vec{x}$ has $p$ entries. To give each entry a chance to be both passed and blocked by ReLU, at least two rows of $B$ (hidden units) are needed per entry, so $B$ must have at least $2p$ rows. Still, this does not guarantee that each entry of $\vec{x}$ is actually both passed and blocked, because initialization is usually a random process, and a perfect outcome is only probable. Hence, using more than $2p$ rows can also be practical. With that, $A$ has enough combinations to cover the whole space of $F$.
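One way to inspect an initialization along these lines is to count the hidden units whose ReLU input takes both signs over the data; units that never change sign act linearly on that data. The sketch below is a rough diagnostic, not a rule from this article: the helper `count_mixed_units`, the Gaussian initialization, and the synthetic data are all assumptions made for illustration.

```python
import numpy as np

def count_mixed_units(Z):
    """Count hidden units whose pre-activation takes both signs over the samples.

    Z has shape (n_samples, n_units). A unit that is positive for every sample
    (or non-positive for every sample) acts linearly on this data set.
    """
    passed = (Z > 0).any(axis=0)     # unit lets at least one sample through
    blocked = (Z <= 0).any(axis=0)   # unit blocks at least one sample
    return int(np.sum(passed & blocked))

rng = np.random.default_rng(0)
p, n = 8, 200                         # illustrative input size and sample count
X = rng.normal(size=(n, p)) + 0.5     # assumed data, slightly off-centre
for width in (p, 2 * p, 4 * p):
    # Assumed zero-mean Gaussian initialization scaled by 1/sqrt(p).
    B = rng.normal(size=(width, p)) / np.sqrt(p)
    print(width, count_mixed_units(X @ B.T))
```

If the count is well below the layer width, many units are stuck on one side of 0, and a different initialization or a wider layer may be worth trying.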

In addition, dropout is the de facto method for avoiding overfitting. If dropout keeps a fraction $d$ of the units, then $B$ must have at least $2p/d$ rows, so that about $2p$ of them remain active.
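The width rule is simple arithmetic. The helper below is hypothetical and reads $d$ as the fraction of units that dropout keeps, which is an assumption; libraries often parameterize dropout by the fraction dropped instead, in which case $d$ would be one minus that value.

```python
import math

def min_hidden_width(p, keep_prob):
    """Smallest width whose expected number of kept units is at least 2 * p."""
    if not 0.0 < keep_prob <= 1.0:
        raise ValueError("keep_prob must be in (0, 1]")
    return math.ceil(2 * p / keep_prob)

print(min_hidden_width(10, 0.5))   # 40
print(min_hidden_width(10, 1.0))   # 20
```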

Conclusion: a minimal practical DNN needs to have the form $F(\vec{x}) = A v(B \vec{x})$, and $B$ should have at least twice as many rows as $\vec{x}$ has entries.
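Put together, the conclusion corresponds to a network like the following sketch. The function names, the Gaussian initialization, and the choice of ReLU as the activation are assumptions for illustration; only the two-layer form and the width of $2p$ come from the argument above.

```python
import numpy as np

def init_params(p, out_dim, rng):
    hidden = 2 * p                                  # hidden width: twice the input size
    B = rng.normal(size=(hidden, p)) / np.sqrt(p)   # assumed Gaussian initialization
    A = rng.normal(size=(out_dim, hidden)) / np.sqrt(hidden)
    return A, B

def forward(A, B, x):
    return A @ np.maximum(B @ x, 0.0)               # A applied to ReLU(B x)

rng = np.random.default_rng(0)
A, B = init_params(p=5, out_dim=2, rng=rng)
y = forward(A, B, rng.normal(size=5))
print(B.shape, y.shape)   # (10, 5) (2,)
```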